AI and Judgement · 2026

AI and strategic judgment: Where it helps, where it misleads

AI is best understood as a capability for language processing and pattern recognition. It is fluent. It is fast. And it can be surprisingly useful at turning disorder into structure. This makes it attractive in strategy work, where executives are overwhelmed by inputs and constantly asked to form views under time pressure.

But the same fluency that makes AI useful also makes it risky. A model can produce a coherent explanation of almost anything. It can make uncertainty feel manageable by giving it shape. It can generate an answer that reads like the conclusion of a careful analysis, even when the underlying basis is thin, mixed, or nonexistent. In other words, it can manufacture confidence.

So the question is not “Should we use AI?” Most organizations will, if they are not already doing so. The question is: Where does it tighten decision logic, and where does it degrade it? If you cannot answer that, you are letting a tool quietly rewrite your standards for what counts as knowing.

| HELPS: When AI Strengthens Strategic Work (When Bounded) | MISLEADS: When AI Degrades Judgment (If Unchecked) |
| --- | --- |
| Synthesis across messy inputs: AI can turn transcripts, notes, emails, customer feedback, and memos into a structured brief that surfaces themes, tensions, recurring claims, and unanswered questions. This is valuable when AI is used for triage and organization, not adjudication. | Plausibility masquerading as truth: AI can produce fluent, confident answers that sound rigorous but are evidentially empty. When teams treat linguistic coherence as analysis, they quietly lower their standards for knowing. |
| Generating alternative hypotheses: AI can be tasked to produce competing explanations of the same situation (“If demand is weakening, what else could explain the data?”), expanding the option set before leaders commit to a single story. | Narrative smoothing that erases uncertainty: Strategy requires sharp edges (disagreement, conditionality, and unresolved tension). AI tends to smooth those edges into coherent narratives, reducing productive conflict and overstating consistency. |
| Red teaming and pre-mortems: Used properly, AI can act as an adversarial reviewer (“Assume the plan fails in 12 months: why?”). The value is not correctness, but forcing exposure of brittleness and second-order risks. | Hidden premise injection: AI often fills gaps by assuming what it needs to assume to make an answer coherent. When those premises enter invisibly, teams lose control of their own reasoning. |
| Structured comparison of options: AI can help draft consistent comparisons across options (criteria, trade-offs, reversibility, risks, and second-order effects) while humans retain control over criteria and weighting. | Over-weighting what is common or recent: AI outputs often reflect what is frequent, popular, or easily retrieved, biasing judgment toward conventional wisdom and recent noise over disconfirming or specialized evidence. |
| Drafting decision records and assumption registers: AI is effective at producing first drafts of structured artifacts (decision statements, assumptions, indicators, triggers, and open questions), precisely the work that prevents drift later. | False precision in numbers, timelines, and confidence: AI can generate crisp estimates that look analytical but are not. In strategy, false precision is worse than uncertainty because it drives premature commitments. |

In summary, AI is most helpful when it provides structure and options. And it misleads most when it produces conclusions that feel warranted but are not traceable.

Plausibility is not evidence.

AI’s outputs often feel convincing because they are coherent. Humans are wired to equate coherence with truth. If something is well-structured, uses familiar causal language, and addresses the question directly, we instinctively treat it as credible. That instinct is dangerous here.

A plausible statement is one that could be true. Evidence is information that makes a claim more likely to be true than it would be otherwise and that can be traced to a source you can interrogate.

AI is exceptionally good at plausibility. It can generate:

Smooth causal chains (“X led to Y because…”)

Balanced lists of pros and cons

Reasonable-sounding analogies and historical parallels

Crisp summaries that imply completeness

None of that, however, is evidence.

Evidence has properties that plausibility lacks: provenance, specificity, falsifiability, and the ability to withstand scrutiny. Evidence can be checked. It can be weighed and contradicted. A plausible narrative can glide past scrutiny because it feels right.

This is why AI can manufacture confidence. It turns ambiguity into an articulate explanation. It reduces the discomfort typically associated with uncertainty. And if leaders are tired, time-starved, or under pressure to “have an answer,” the organization may accept plausibility as proof.

The antidote is not cynicism; it is discipline: require clear separation between (a) what is asserted as fact with a source, (b) what is inferred, and (c) what is hypothesized as a possibility. If you blur those categories, AI will also blur them for you.

Where AI helps, bounded: The “right shape” of tasks

The safest way to use AI in strategic judgment is to limit it to tasks where its strengths are relevant and its weaknesses are manageable.

AI is strongest when the work is:

Text-heavy and messy (notes, reports, interviews)

Comparative (options, criteria, trade-offs)

Exploratory (hypotheses, alternative frames)

Procedural (drafting structured artifacts)

AI is weakest when the work requires:

Ground-truth validation (what is actually true right now)

Context-specific tacit knowledge (how your regulator behaves, how your customer actually buys)

Numerical rigor (probabilities, forecasts, valuations)

Accountability (who takes responsibility for the claim)

This is not philosophical; it is operational. Put AI where it adds leverage without being asked to do what it cannot do reliably.

The governance issue: Who owns the logic?

The most important governance question is not “Who owns the tool?” but “Who owns the reasoning?”

In an AI-assisted environment, it becomes easy for logic to become orphaned. A document is produced, a recommendation appears, and the output is circulated. But when someone asks, “Why do we believe this?” there is no clear chain of custody: Which claims are sourced, which assumptions were made, and which human is accountable for the final judgment?

This is where organizations get into trouble. Not because AI is malicious, but because it makes it easy to move fast without preserving the rationale. Strategy becomes a sequence of outputs rather than a sequence of decisions with explicit premises.

A workable governance model must answer three questions:

What must be documented? (so reasoning is auditable)

Who approves what? (so accountability is clear)

How are assumptions tracked over time? (so drift is detected early)

You do not need a heavy committee. You need ownership and a minimal standard of evidence.

Lightweight governance model (pragmatic, not bureaucratic)

Below is a governance model that is both lightweight and disciplined enough to matter.

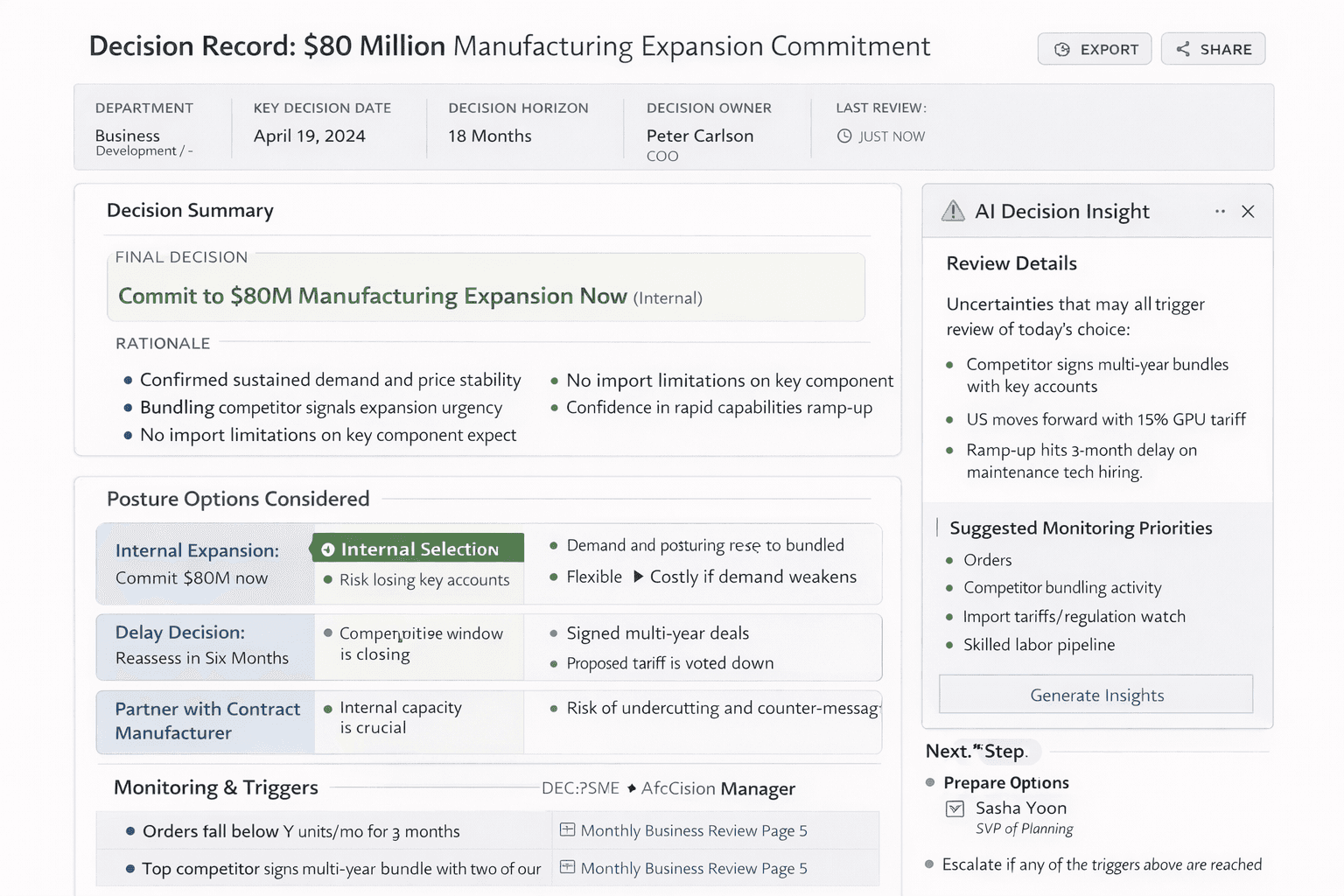

What gets documented (minimum viable record)

For any material decision where AI contributes to the reasoning, document:

Decision statement: What is being decided and by when, and what is the time horizon?

Options considered: At least two plausible alternatives.

Key claims and evidence: Claims tagged as fact (with source), inference (reasoned from facts), or hypothesis (possible explanation).

Assumption register: 3–7 load-bearing assumptions that must be true for the chosen option to work.

Indicators and triggers: What will be monitored, and what thresholds will trigger a review?

AI contribution log: What AI was used for (synthesis, hypothesis generation, red team), and where its output appears in the record.

It doesn’t need to be long. It needs to be explicit.
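The minimum viable record above maps naturally onto a small data structure. What follows is a minimal Python sketch, not a prescribed schema: the class, field, and method names (`DecisionRecord`, `Claim`, `missing_elements`) are hypothetical, and the checks only encode the thresholds stated in the list (at least two options, 3 to 7 load-bearing assumptions, a source for every fact).

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    text: str
    tag: str          # "fact", "inference", or "hypothesis"
    source: str = ""  # expected only when tag == "fact"


@dataclass
class DecisionRecord:
    statement: str  # what is decided, by when, and over what horizon
    options: list[str] = field(default_factory=list)
    claims: list[Claim] = field(default_factory=list)
    assumptions: list[str] = field(default_factory=list)
    triggers: list[str] = field(default_factory=list)
    ai_contributions: list[str] = field(default_factory=list)

    def missing_elements(self) -> list[str]:
        """Return human-readable gaps against the minimum record."""
        gaps = []
        if not self.statement.strip():
            gaps.append("decision statement is empty")
        if len(self.options) < 2:
            gaps.append("fewer than two options considered")
        if not 3 <= len(self.assumptions) <= 7:
            gaps.append("assumption register should hold 3-7 items")
        if not self.triggers:
            gaps.append("no indicators or triggers defined")
        for claim in self.claims:
            if claim.tag == "fact" and not claim.source.strip():
                gaps.append(f"unsourced fact: {claim.text}")
        return gaps


# Illustrative, deliberately incomplete record.
record = DecisionRecord(
    statement="Enter mid-market in Q3 via partners",
    options=["direct entry", "partner-led entry"],
    assumptions=["partner incentives align", "integration done by June 30"],
)
print(record.missing_elements())
```

The evidence steward could run a check like this before the decision owner signs. It flags gaps in form only; whether a source is actually credible remains a human judgment.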

Who approves (clear roles)

Decision owner (accountable): The executive who owns the decision and signs the record. This person cannot delegate accountability to the tool or to a team.

Evidence steward (responsible): A person (often in strategy, research, or analytics) responsible for verifying sources, tagging claims (fact/inference/hypothesis), and ensuring that citations exist where required.

Operational owner(s) for triggers (responsible): The people who monitor specific indicators and report when thresholds are crossed.

Approval is simple: The decision owner signs the final decision record after the evidence steward confirms that it meets the standards. If it’s a high-stakes decision, require a second sign-off from a peer executive who is incentivized to disagree (built-in red team role).

How assumptions are tracked (so the plan doesn’t drift)

Store assumptions in a living register tied to the decision record.

Assign an owner and review cadence for each indicator (monthly/quarterly/event-driven).

When a trigger is hit, require a brief “decision review note”: what changed, whether the assumption still holds, and whether posture has changed.

This is not about bureaucracy. It is about preventing silent drift and preserving institutional memory.
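The tracking steps above can be sketched as a simple threshold check. This is an illustrative Python sketch under stated assumptions: the names `Trigger` and `review_notes` are hypothetical, the indicator values are made up, and it only handles triggers that fire when a reading rises above a threshold. A "falls below" trigger would need an added direction field.

```python
from dataclasses import dataclass


@dataclass
class Trigger:
    indicator: str    # what is monitored
    owner: str        # who reports on it
    threshold: float  # value beyond which a review is required
    assumption: str   # the load-bearing assumption it protects


def review_notes(triggers: list[Trigger], readings: dict[str, float]) -> list[str]:
    """Return one review prompt per trigger whose reading exceeds its threshold."""
    notes = []
    for t in triggers:
        value = readings.get(t.indicator)
        if value is not None and value > t.threshold:
            notes.append(
                f"REVIEW ({t.owner}): {t.indicator} = {value} exceeds {t.threshold}; "
                f"does '{t.assumption}' still hold?"
            )
    return notes


# Illustrative values only.
triggers = [
    Trigger("integration_slip_days", "CTO", 30, "integration completes on schedule"),
    Trigger("exclusive_top_accounts", "Head of Sales", 2, "competitor does not lock key accounts"),
]
notes = review_notes(triggers, {"integration_slip_days": 45, "exclusive_top_accounts": 1})
print(notes)
```

Each returned note is only a prompt for the human-written decision review note. The code detects that a threshold was crossed; it does not decide whether posture should change.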

Example: AI-assisted decision record (what it looks like)

Decision: Enter the mid-market segment in Q3 via a partner-led offering versus direct sales.

Options considered: (A) direct entry with new sales team, (B) partner-led entry, (C) delay entry six months.

AI used for: synthesis of 27 customer interviews and drafting alternative hypotheses about slow pipeline conversion; red team of partner risks.

Key facts (sourced): Interview themes indicate that procurement cycles average 4–6 months; partner X has existing distribution in three target regions.

Inferences: partner-led entry reduces time to revenue, but constrains pricing control.

Assumptions (load-bearing): partner incentives will align for 12 months; integration can be completed by June 30; the competitor will not lock key accounts with exclusivity.

Indicators/triggers: If integration milestones slip by more than 30 days, revisit option C. If the partner-sourced pipeline falls below $Y by August 15, revisit option A. If competitor exclusivity appears in more than two top accounts, convene a review within 10 business days.

Ownership: VP Partnerships owns partner pipeline indicator; CTO owns integration milestones; Head of Sales owns competitive account signals.

Approval: decision owner (COO) approved; evidence steward (strategy lead) verified sources and tagging.

Notice what is absent: any claim that AI “recommended” the decision. AI helped structure inputs and stress test assumptions. A human owned the logic, the evidence, and the triggers.

Practical operating rules that keep AI useful

If you adopt AI into strategic work, you need explicit operating rules that are enforceable.

Define allowed uses.

Decide where AI is permitted (synthesis, drafting, hypothesis generation, red team) and where it is not (final factual assertions without sources, probability estimates presented as numbers, regulatory interpretations without counsel).

Require citations for factual claims.

If a claim is presented as fact, it must have a source. If it cannot be sourced, it must be tagged as an inference or hypothesis. This single rule eliminates many instances of manufactured confidence.

Separate inference from fact, visibly.

Use tags. Make it impossible to confuse what is known with what is reasoned.

Preserve rationale in a decision record.

The decision record is not paperwork; it is the audit trail that prevents revisionist history and helps the organization move quickly later.

Make assumptions explicit and monitorable.

If the plan depends on assumptions that you cannot monitor, your strategy is fragile. AI should help you surface assumptions, not hide them.
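One way to make the first rule (defining allowed uses) enforceable is to check each entry as it goes into the AI contribution log. The following is a hedged Python sketch: the set `ALLOWED_USES` mirrors the permitted uses named in the rule, while the function name `log_ai_use` and the log entry format are assumptions made for illustration.

```python
# Permitted uses, taken from the rule; the identifiers themselves are illustrative.
ALLOWED_USES = {"synthesis", "drafting", "hypothesis_generation", "red_team"}


def log_ai_use(log: list[dict], use: str, location: str) -> None:
    """Append an entry to the AI contribution log, rejecting uses outside the allowed set."""
    if use not in ALLOWED_USES:
        raise ValueError(f"'{use}' is not an allowed AI use; route it to a human owner")
    log.append({"use": use, "appears_in": location})


contribution_log: list[dict] = []
log_ai_use(contribution_log, "synthesis", "Key claims, interview themes")
log_ai_use(contribution_log, "red_team", "Partner risk section")

try:
    # A probability estimate presented as a number is outside the allowed set.
    log_ai_use(contribution_log, "probability_estimate", "Forecast table")
except ValueError as err:
    print(err)
```

A check like this only works if people log honestly. Its value lies in making the boundary explicit at the moment AI output enters the record.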

Simple workflow: AI assists → human adjudicates → record preserved → triggers set

This is the operating rhythm that works:

AI assists with synthesis, option comparison, alternative hypothesis generation, and red-team prompts.

Human beings adjudicate facts, weigh trade-offs, decide criteria, and own the final judgment.

The decision record is preserved with tagged claims, sources, assumptions, and rationale.

Monitoring triggers are set with thresholds and owners, so posture can change early and cleanly.

You can run this workflow in a week. You can also run it in a day for urgent decisions, as long as you keep the discipline.

Conclusion: AI should tighten decision logic, not replace it

AI can strengthen strategic judgment if you use it to do what strategy teams often fail to do under pressure: broaden hypotheses, stress test assumptions, document rationale, and define triggers before commitments become irreversible.

It will mislead you if you let it substitute plausibility for evidence, smooth conflict into narrative comfort, or inject hidden premises into your reasoning. The failure mode is subtle: You will feel more informed while becoming less rigorous.

Use AI to tighten the logic. Keep human beings accountable for what is true, what is assumed, and what will force a change in posture. That is the standard worth defending.