Decision Architecture · 2026

The hidden cost of inheriting someone else’s decision logic

Organizations often think they are buying tools. In reality, they are often buying embedded decision logic: categories, defaults, workflows, escalation rules, metrics, and implicit assumptions about what matters. These embedded structures shape what is visible and what is ignored. They influence what is treated as “evidence,” what is escalated, and what is quietly normalized. Over time, they can become the organization’s strategy—not in the form of an explicit plan, but as a silent operating logic that governs how choices are made.

This is why technology decisions can be strategic risk decisions, even when they are framed as procurement or productivity initiatives. When an organization inherits someone else’s model of the world, it can lose the ability to audit its own reasoning. It can become difficult to explain why a posture was adopted, why an alternative was dismissed, or what would need to change for the organization to update its course of action. The cost is not only lock-in, but also fragility: the slow drift toward decisions that feel rational within the tool’s frame but are misaligned with the organization’s true risk posture and operating reality.

This piece is about maintaining sovereignty over judgment, while still adopting platforms and capabilities. It focuses on what leaders should insist on: traceable rationale, explicit assumptions, portability of decision records, and governance over the categories and thresholds that shape action. Tools can be valuable. Outsourcing your decision logic is rarely worth the price.


Opening: Buying a platform often means buying embedded categories, defaults, and escalation logic.

Every platform is a theory of the world. It comes with baked-in definitions: what an “issue” is, what a “risk” is, what a “case” is, what “priority” means, what constitutes a resolution, what is considered compliant, and what is considered urgent. It also comes with mechanics: how work is routed, how exceptions are handled, what gets measured, what triggers escalation, and what disappears into status updates.

Procurement conversations tend to treat these definitions and mechanics as implementation details. They are not; they are governance decisions. When you adopt a platform at scale, you often adopt a vocabulary, a set of workflows, and a set of defaults that begin to govern your organization’s attention.

That governance is rarely explicit. It arrives quietly—through configuration screens, templates, standard fields, and “recommended best practices.” The vendor will describe it as “accelerators” and “out-of-the-box value.” You will describe it as “getting live quickly.” Meanwhile, the platform is shaping how your organization reasons.

The hidden cost is not that the platform is malicious. It is that the platform is opinionated, and those opinions become your decision logic.

Why this matters: Decision logic is strategy.

Most organizations say strategy is a plan: a set of goals, initiatives, and resource allocations. In practice, strategy is something more operational and less glamorous. It is the organization’s recurring logic for deciding what matters, what is urgent, what constitutes acceptable risk, what counts as evidence, and when to adjust its posture.

That recurring logic is what I mean by decision logic, which answers questions such as:

What categories do we use to name the world?

What variables do we track, and which do we ignore?

What thresholds trigger escalation?

Who is authorized to decide under uncertainty?

What is treated as an exception as opposed to a pattern?

What evidence is required to act?

What do we do when evidence conflicts?

What gets documented, and what is left as “tribal knowledge”?

Platforms quietly answer many of these questions. They do it through the categories available in drop-down menus. Through mandatory fields and optional fields. Through how priorities are calculated. Through what gets flagged. Through which workflows exist for escalation, approval, exception-handling, and audit.

If the platform’s embedded logic differs from your organization’s mandate, risk posture, or operating reality, you will not merely experience “some friction.” You will slowly get a different strategy than the one you initially thought you had, because the platform will shape what the organization sees and how it responds.

This is why “decision logic is strategy.” And why inheriting someone else’s decision logic can be a form of strategic drift.

The hidden costs of inherited logic

The costs show up in predictable ways. Most are not visible in a pilot, because pilots tend to be run by motivated teams under controlled conditions. The costs emerge, however, when the platform becomes the normal operating environment.

Lock-in that is deeper than data

Conventional lock-in is primarily about data migration: Can you export your data and move it elsewhere? The deeper lock-in is epistemic: You have trained your organization to see and act through a particular set of categories and workflows. Even if you move your data, you carry the habits.

If your risk management system only tracks risk in certain fields, you start managing it as the system defines it. If your case management system routes work using a certain prioritization, you start treating that prioritization as objective. If your intelligence platform has a certain “confidence” scoring model, you start trusting that score, even when you cannot explain it.

Misalignment with values and mandate

Organizations maintain values and mandates that at times are explicit and at times are cultural: caution versus speed, centralized control versus distributed autonomy, transparency versus discretion, and formal evidence versus expert judgment. Platforms encode trade-offs across these dimensions.

A platform optimized for speed may normalize incomplete documentation. A platform optimized for compliance may slow decisions and push work into checklists. A platform optimized for workflow efficiency may penalize dissent and hinder exception handling.

If you adopt the platform without articulating your own priorities, you inherit the vendor’s priorities. And that is not neutral.

Opaque trade-offs and inability to audit

Many platforms, especially those incorporating automation and AI, make it difficult to answer basic governance questions: Why did this get escalated? Why was that deprioritized? Why did the system recommend this action? What evidence did it use? What assumptions did it make?

If you cannot audit the reasoning chain, you cannot own accountability. You may still be legally accountable, reputationally accountable, and operationally accountable—but you cannot interrogate the logic that drove outcomes.

Loss of institutional learning

Organizations learn when they can compare their intent to their outcomes. That requires preserving decision records: What was decided, why, what assumptions were in play, what signals were monitored, and what would have changed the decision.

Platforms often capture activity, not rationale. They record completed tasks, routed approvals, and status changes; they do not preserve the logic of judgment. Over time, the organization becomes operationally efficient, while becoming strategically amnesiac. It repeats mistakes, because it has no durable record of what it believed and why.
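The gap between an activity log and a decision record can be made concrete with a minimal sketch. The field names below are assumptions drawn from the list above (what was decided, why, which assumptions were in play, which signals were monitored, and what would have changed the decision), and the supplier example is hypothetical; this is one possible shape, not a prescribed schema.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class ActivityEntry:
    """What platforms typically keep: who did what, and when."""
    when: date
    actor: str
    action: str


@dataclass
class DecisionRecord:
    """What institutional learning needs: the reasoning behind the choice."""
    decided_on: date
    decision: str
    rationale: str
    assumptions: list[str] = field(default_factory=list)
    signals_monitored: list[str] = field(default_factory=list)
    would_change_our_mind: list[str] = field(default_factory=list)

    def missing_elements(self) -> list[str]:
        """Name the reasoning elements that were left empty."""
        checks = {
            "rationale": self.rationale.strip(),
            "assumptions": self.assumptions,
            "signals_monitored": self.signals_monitored,
            "would_change_our_mind": self.would_change_our_mind,
        }
        return [name for name, value in checks.items() if not value]


# Hypothetical example: the activity entry says that something was approved,
# while the decision record says what the organization believed at the time.
activity = ActivityEntry(date(2026, 1, 15), "procurement lead", "approved sourcing plan")

record = DecisionRecord(
    decided_on=date(2026, 1, 15),
    decision="Keep a single supplier for component X",
    rationale="Lowest unit cost; no delivery problems observed so far",
    assumptions=["Supplier lead times stay stable"],
    signals_monitored=["Delivery delays", "Quality variation"],
)

print(record.missing_elements())  # ['would_change_our_mind']
```

A completeness check like `missing_elements` is a design choice rather than a requirement: it makes an undocumented "what would change our mind" visible at the moment of decision instead of a year later.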

Brittle interoperability

Interoperability is often described as “integration.” The brittleness, however, is not only technical; it is also conceptual. Two systems can exchange data and still miscommunicate because they categorize reality differently. One system’s “risk” is another system’s “issue.” One system’s “resolved” is another system’s “mitigated.” One system’s “priority” is another system’s “severity.”

When you inherit a platform’s ontology and then try to integrate it with others, you either distort your own categories to match the tool, or build complex translation layers that degrade meaning over time.

What you inherit when you buy a platform

It is useful to be explicit about what “embedded decision logic” typically includes. In my experience, buyers inherit four major layers.

1) Categories and ontologies

These are the nouns of the system: the kinds of things that exist on the platform, their relationships, and how they are labeled. Categories drive what can and cannot be represented.

If your environment requires you to distinguish between “weak signals,” “anomalies,” “indicators,” and “confirmed events” but the platform gives you only “alerts” and “incidents,” you will compress meaning. That compression changes judgment.
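One way to see the compression is to write the category mapping down and check where distinct meanings collapse into a single label. The sketch below uses the four categories named above; the specific assignment to "alert" and "incident" is an assumption made for illustration, and `collapsed_categories` is a hypothetical helper, not a feature of any particular platform.

```python
from collections import defaultdict

# Hypothetical mapping from an organization's categories to a platform's.
# The source categories come from the text; the assignment is assumed.
ORG_TO_PLATFORM = {
    "weak signal": "alert",
    "anomaly": "alert",
    "indicator": "alert",
    "confirmed event": "incident",
}


def collapsed_categories(mapping: dict[str, str]) -> dict[str, list[str]]:
    """Return platform categories that absorb more than one source category."""
    grouped = defaultdict(list)
    for source, target in mapping.items():
        grouped[target].append(source)
    return {t: sorted(s) for t, s in grouped.items() if len(s) > 1}


print(collapsed_categories(ORG_TO_PLATFORM))
# {'alert': ['anomaly', 'indicator', 'weak signal']}
```

Any target category that appears in the result is a place where the original distinction cannot be recovered from the platform's records alone.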

2) Defaults and metrics

Defaults are not minor conveniences. They become policy and include things like:

Default priority scoring rules

Default SLAs

Default risk severity mapping

Default confidence scales

Default required fields and optional fields

Default templates for assessments and reports

If you accept defaults, you accept the vendor’s assumptions about what is normal. Most organizations never revisit them, and they become invisible governance.

3) Workflows and exception-handling

Workflows determine what happens next, including routing, approvals, handoffs, and escalations. They also decide what happens when things do not fit: exceptions, overrides, and “other.”

If creating exceptions is hard, people stop raising them. If overrides require too much friction, people accept the system’s output even when they doubt it. If the workflow privileges linear progression, complex problems get flattened into checkboxes.

4) Escalation logic and authority design

This is where decision logic becomes power. Platforms embed who can escalate, who can approve, and what conditions trigger escalation.

If escalation is threshold-based but thresholds are generic, you inherit a generic risk posture. If escalation is role-based but roles are misaligned to your accountability, you create gaps: The person who sees the signal cannot act, and the person who can act does not see the signal.

Escalation logic is not “workflow.” It is a statement about how the organization governs itself under uncertainty.

What “owning your logic” means

Owning your decision logic does not mean building everything yourself. It means refusing to let tools become the unexamined authors of your governance. In practical terms, “owning your logic” requires you to define four areas.

Decision model

What are the recurring decisions the organization must make? What inputs matter? What standards of evidence apply? What thresholds trigger posture review? How do you treat uncertainty?

Assumption structure

What are the load-bearing assumptions behind key commitments? How are they documented? How are they monitored? What would falsify them?

Evidence standards and auditability

What claims require citations? What constitutes acceptable evidence? What must be traceable? What is inference versus fact?

Governance and update authority

Who can change thresholds? Who can change categories? Who owns the ontology? How are changes reviewed? How do you prevent silent drift?

A platform can either support or undermine these choices. The point is that these are your choices to make explicitly, not defaults to inherit.

Due diligence checklist: 12 questions a buyer should ask before locking in

If you are buying a platform that will govern how your organization sees and decides, your due diligence should be as serious as your financial diligence. Here are 12 practical questions.

1) What ontology does the platform assume, and can we inspect it?

Request to view the category model, which includes entities, relationships, required fields, and definitions.

2) Which categories are fixed, and which are customizable without vendor involvement?

Customization that requires professional services is not customization; it is dependency.

3) What are the default scoring, prioritization, and escalation rules?

Ask for the actual logic, not marketing descriptions. What triggers what?

4) Can we change thresholds and triggers ourselves, and is there version control?

If thresholds change, can you track who changed them, when, and the reason for the change?

5) How does the system handle exceptions and overrides?

How easy is it to raise an exception? Are overrides logged and reviewable?

6) What does the platform preserve as a decision record versus an activity log?

Can it capture rationale, assumptions, evidence, and “what would change our mind,” or only tasks and time stamps?

7) How does the platform separate fact from inference from hypothesis?

If it cannot, you will constantly confuse narrative with evidence.

8) What auditability and traceability are available for automated outputs and recommendations?

If the system generates scores or recommendations, can you inspect the basis and the contributing factors?

9) What is the export and portability model for both data and logic?

Can you export not only records, but also the ontology, workflows, thresholds, and decision logs in a usable form?

10) How does the platform integrate with existing systems without collapsing in meaning?

Ask how it maps categories across tools and where semantic loss occurs.

11) Who owns governance inside the vendor relationship, and what is the change process?

If the platform evolves, how are changes communicated? Can changes be sandboxed? Who approves updates that affect decision logic?

12) What is the failure mode under stress: speed, outages, adversarial inputs, or uncertainty spikes?

How does the system behave when signals surge, when data is incomplete, or when inputs conflict? Platforms reveal their real logic under stress.

If a vendor cannot answer these questions clearly, you are being asked to buy a system you cannot fully govern.
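The checklist's question about threshold version control (who changed a threshold, when, and why) describes a small, testable capability. The following is a minimal sketch of what it could look like, assuming an in-memory registry; `ThresholdRegistry`, its method names, and the example values are all hypothetical and do not describe any vendor's API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ThresholdChange:
    """One immutable entry in the change history."""
    name: str
    old_value: Optional[float]
    new_value: float
    changed_by: str
    reason: str
    changed_at: datetime


class ThresholdRegistry:
    """Holds current thresholds and an append-only history of changes."""

    def __init__(self) -> None:
        self._current: dict[str, float] = {}
        self._history: list[ThresholdChange] = []

    def set(self, name: str, value: float, changed_by: str, reason: str) -> None:
        # Refuse silent changes: every update must carry a stated reason.
        if not reason.strip():
            raise ValueError("a threshold change needs a stated reason")
        self._history.append(ThresholdChange(
            name, self._current.get(name), value, changed_by, reason,
            datetime.now(timezone.utc),
        ))
        self._current[name] = value

    def get(self, name: str) -> float:
        return self._current[name]

    def history(self, name: str) -> list[ThresholdChange]:
        return [c for c in self._history if c.name == name]


# Hypothetical usage: a vendor default is later tightened by a named owner.
registry = ThresholdRegistry()
registry.set("severity_escalation", 7.0, "vendor default", "initial configuration")
registry.set("severity_escalation", 5.0, "risk committee",
             "supplier delays were going unescalated")

for change in registry.history("severity_escalation"):
    print(change.old_value, "->", change.new_value, "|", change.changed_by, "|", change.reason)
```

If a platform cannot produce the equivalent of `history` for its own thresholds, the organization has no way to tell a deliberate change in risk posture from silent drift.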

A short example: Same tool, different outcomes—because logic and governance differ

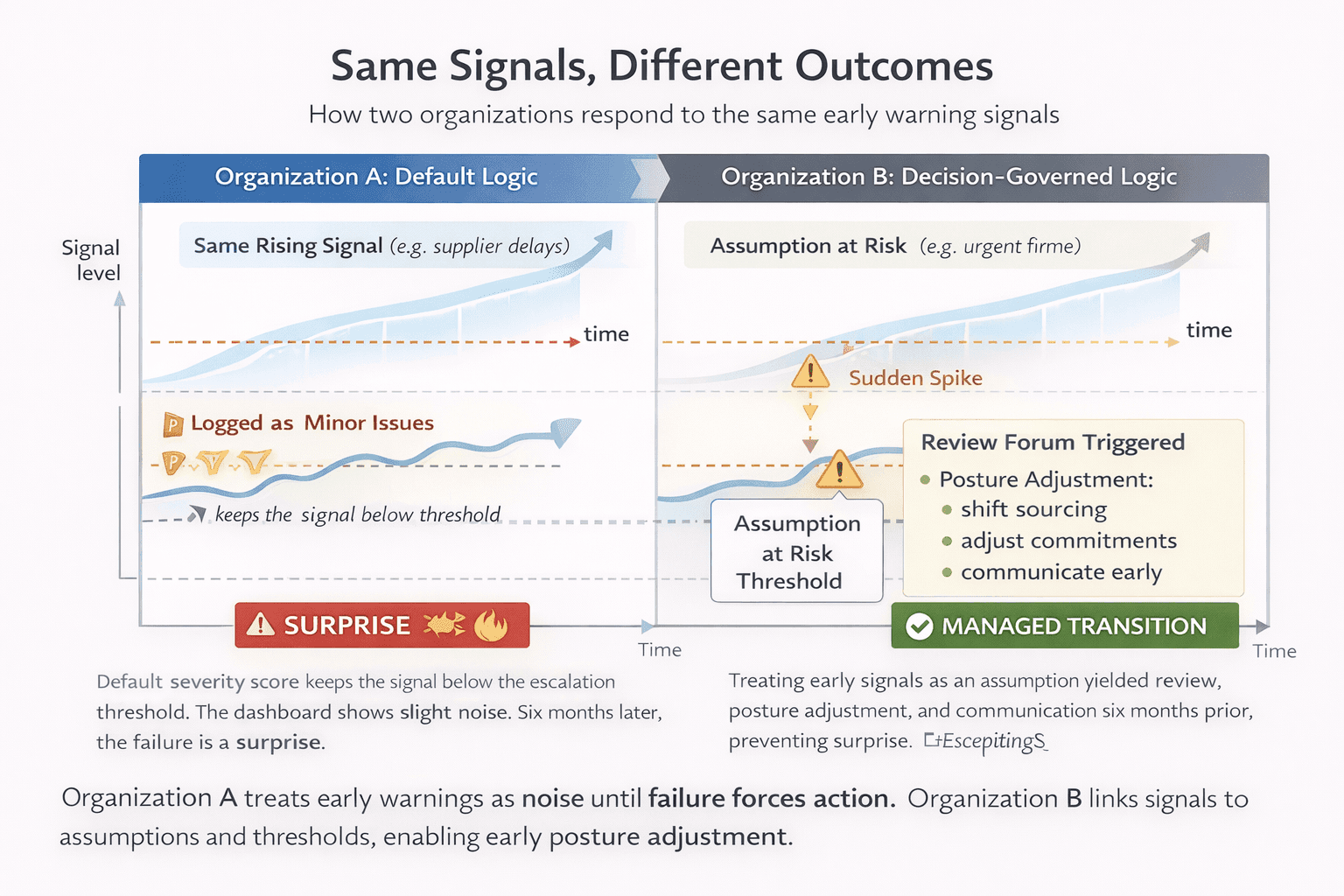

Consider two organizations adopting the same incident and risk management platform.

Organization A treats the platform as infrastructure. It accepts the default categories and severity scoring. Escalation thresholds are generic. Exceptions require senior approval and are culturally discouraged. The platform produces weekly dashboards with neat trends. Leaders feel informed.

Organization B treats the platform as a governance tool. Before rollout, it defines its decision logic: what constitutes a “weak signal” versus an “incident,” what assumptions underlie its risk posture, what evidence is required for escalation, and what triggers a posture review. It modifies categories so that anomalies can be logged without being forced into “incident” language. It builds a decision record template into the workflow. Threshold changes require sign-off and are versioned. Each indicator has an owner and a monthly review forum.

A year later, a supplier constraint begins to emerge. Early signals appear as small delivery delays and variations in quality.

In Organization A, these signals are logged as minor issues. The default severity score keeps them below the escalation threshold. The dashboard shows slight noise. Teams work around it. Six months later, the constraint becomes acute, forcing a scramble. Leadership describes it as a surprise.

In Organization B, the same early signals map to an “assumption at risk” category tied to a strategic commitment. The indicator crosses a threshold that triggers a review forum. The organization changes posture earlier—shifting sourcing, adjusting commitments, and communicating proactively with customers. The “surprise” is absorbed as a managed transition.

Same platform. Different outcomes. The difference is not the tool, but who owned the logic and whether governance was explicit.
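The contrast can be sketched as two escalation rules applied to the same stream of signals. Everything numeric here is an illustrative assumption (the 1 to 10 severity scale, the thresholds, the signal scores), as are the function names; the point is only that identical inputs produce different outcomes once signals are tied to an explicit assumption.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Signal:
    category: str
    severity: int  # assumed platform default score, 1 (low) to 10 (high)


def org_a_escalations(signals: list[Signal], severity_threshold: int = 7) -> list[Signal]:
    """Generic rule: escalate only signals whose default score is high enough."""
    return [s for s in signals if s.severity >= severity_threshold]


def org_b_reviews(signals: list[Signal], assumption_of: dict[str, str],
                  count_threshold: int = 2) -> list[str]:
    """Count signals per assumption at risk; trigger a review once the count
    reaches the threshold, regardless of individual severity."""
    counts = Counter(
        assumption_of[s.category] for s in signals if s.category in assumption_of
    )
    return [assumption for assumption, n in counts.items() if n >= count_threshold]


# Hypothetical early signals: each one is minor when viewed alone.
signals = [
    Signal("delivery delay", 2),
    Signal("quality variation", 3),
    Signal("delivery delay", 2),
]

# Organization B's mapping from signal category to the assumption it puts at risk.
assumption_of = {
    "delivery delay": "supplier capacity is stable",
    "quality variation": "supplier capacity is stable",
}

print(org_a_escalations(signals))             # []
print(org_b_reviews(signals, assumption_of))  # ['supplier capacity is stable']
```

Neither rule requires a different platform. The second one requires that someone has written down which assumption each signal bears on and who reviews it when the count crosses the line.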

Conclusion: You can adopt tools without outsourcing your judgment

Platforms can be valuable. They can create efficiency, consistency, and visibility. But they also carry embedded decision logic. If you adopt that logic unexamined, you gradually outsource parts of your judgment, especially in the gray zones where strategy actually lives.

The goal is not to reject platforms. It is sovereignty: to insist that the organization’s decision model, evidence standards, and escalation authority remain yours. Buy tools. Integrate capabilities. But do not inherit someone else’s logic by default.

You can adopt tools without outsourcing your judgment. The price is the discipline to ask the right questions before lock-in and the willingness to treat “configuration” as governance, not as an implementation detail.